Sonification demo: Bending Energy algorithm output mapped to a speech synthesizer.

Tag: design notes

DIGF5002 2017-03-23

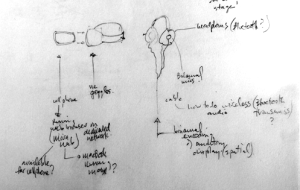

Early user experience design sketch (audio)

What this system could sound like as an imagined user walks down a busy city street while listening to music.

The detection system is housed in a cell phone application, and the phone is mounted onto a pair of virtual-reality goggles.

As objects approach the listening user, the app applies real-time signal processing to the music in a way that describes the shape of the object.
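One way the shape-to-sound idea could be prototyped, as a rough sketch only: compute the bending energy of an object's outline (the measure named in the title of these notes) and use it to set the cutoff of a low-pass filter on the music. Everything specific here is an assumption for illustration: the names `bending_energy` and `cutoff_from_energy`, the polygon contour input, the discrete curvature estimate, the choice of a filter cutoff as the target parameter, and the 200 Hz to 8 kHz range are hypothetical and not taken from the project.

```python
import math

def bending_energy(contour):
    """Mean squared curvature of a closed polygon [(x, y), ...].

    Assumes consecutive points are distinct (no zero-length edges).
    """
    n = len(contour)
    total = 0.0
    perimeter = 0.0
    for i in range(n):
        x0, y0 = contour[i - 1]
        x1, y1 = contour[i]
        x2, y2 = contour[(i + 1) % n]
        ax, ay = x1 - x0, y1 - y0          # incoming edge
        bx, by = x2 - x1, y2 - y1          # outgoing edge
        len_a = math.hypot(ax, ay)
        len_b = math.hypot(bx, by)
        # Signed turning angle between the two edges at this vertex.
        turn = math.atan2(ax * by - ay * bx, ax * bx + ay * by)
        ds = 0.5 * (len_a + len_b)         # arc length assigned to the vertex
        kappa = turn / ds                  # discrete curvature estimate
        total += kappa * kappa * ds
        perimeter += len_a
    return total / perimeter

def cutoff_from_energy(energy, low_hz=200.0, high_hz=8000.0, max_energy=1.0):
    """Map bending energy to a filter cutoff (hypothetical mapping).

    Smooth outlines (low energy) give a darker sound, jagged outlines
    (high energy) a brighter one. Interpolation is exponential so that
    equal energy steps give equal pitch-like steps in cutoff.
    """
    t = min(max(energy / max_energy, 0.0), 1.0)
    return low_hz * (high_hz / low_hz) ** t
```

For a circle of radius r this measure comes out close to 1/r², so larger, smoother outlines score lower. Whether brighter should mean "more jagged" is a design choice to test by ear, not a given.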

DIGF5002 2017-03-14

R2-D2 as a data sonification design prototype?

“R2-D2 was the most difficult non-human character to develop a voice for, Burtt says. He was a machine that was going to talk, act, and work opposite well-known actors. But he didn’t have a face or speak English. Initial voice tests for Artoo seemed to lack “a human quality.” After some trial and error, Burtt began imitating the sounds an infant might make, and he found that it worked: R2-D2 could convey emotion without speaking words. Thus, the idea was to combine mechanical and human sounds, and Burtt combined his voice with electronic sounds via a keyboard. It helped him understand how Artoo could inflect and, ultimately, deliver a performance.”

Burtt arrived at an integration of synthesized and human speech sounds, in part through an initial process of ‘vocal sketching’ — a useful technique for rapidly prototyping sound design concepts, such as for new devices, auditory displays or systems.

DIGF5002 2017-03-09

More sonification design and aesthetics.

Inspired by malleable properties of the human voice.

Auditory icons like synthetic chattering voices.

Inspired partly by Domino’s Pizza scooter alert signals again.

Sounds a person cannot help but pay attention to — other than crying babies and alarms.

Walking.

Busy street.

Fragments of conversations caught.

Analyzed.

Physiologically irresistible.

Brain tunes in.

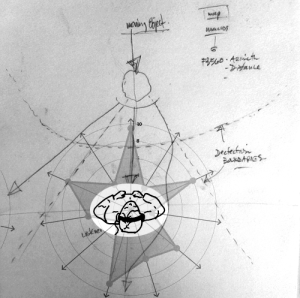

Detects changing proximity and shape factor.

Brain tunes out.

Minimal cognitive taxation.

DIGF5002 2017-03-02

Playful theatricality.

Inspired by Domino’s Pizza delivery people in the Netherlands.

Sound design approach — less ‘stark’ (as in clinical and austere alert signals) … but more Starck (as in Philippe Starck, French designer). https://en.wikipedia.org/wiki/Philippe_Starck

DIGF5002 2017-02-24

Designing listening (‘choreographies of attention’) rather than designing sound.

Diagram of the hardware components of the user’s system:

• Pair of headphones wired up with a binaural microphone at each ear.

• Enables the user to monitor the external auditory environment while listening to prerecorded audio (music, podcasts, etc.).

• A cell phone app mixes the different signals automatically according to the detected presence and shape factor of objects that enter the user’s detection system.
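The automatic mixing described in the last bullet could be sketched as a crossfade between the prerecorded audio and the binaural microphone feed. This is a minimal illustration under stated assumptions: `mix_gains` and `mix_sample` are hypothetical names, both `proximity` and `shape_factor` are assumed to be already normalized to the range 0 to 1, and the 0.2 ambient floor and 0.8 ducking depth are arbitrary starting values.

```python
def mix_gains(proximity, shape_factor):
    """Return (music_gain, ambient_gain) for the current detection state.

    proximity:    0.0 = nothing nearby, 1.0 = object very close (assumed scale)
    shape_factor: 0.0 to 1.0 shape descriptor (assumed normalized)
    """
    p = min(max(proximity, 0.0), 1.0)
    s = min(max(shape_factor, 0.0), 1.0)
    # Proximity drives the mix; shape factor scales how strongly it does so.
    urgency = p * (0.5 + 0.5 * s)
    music_gain = 1.0 - 0.8 * urgency      # duck the music as objects approach
    ambient_gain = 0.2 + 0.8 * urgency    # bring the outside world forward
    return music_gain, ambient_gain

def mix_sample(music, ambient, proximity, shape_factor):
    """Mix one music sample with one microphone sample."""
    g_music, g_ambient = mix_gains(proximity, shape_factor)
    return g_music * music + g_ambient * ambient
```

With nothing detected, the music plays at full level over a quiet ambient bed. As an object closes in, the balance shifts toward the microphones.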

DIGF5002 2017-02-16

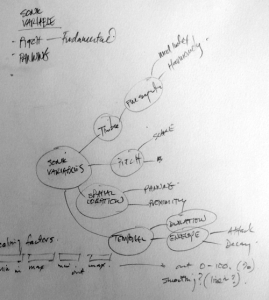

Mind mapping sonification variables for experimentation.

For next meeting: we will assemble a variety of small moving objects to document on video, for experimenting with sonification through computer vision.

Emphasis:

▪ Tracking and estimating the movement speed and trajectories of the objects.

▪ Identifying important properties (visual, mass, texture, weight) of a static object.

▪ Identifying those same properties while the object is moving.
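For the first point, speed and direction can be estimated from a tracked object's positions across video frames using finite differences. The sketch below assumes a tracker has already produced a time-ordered list of `(t, x, y)` centroid observations. That input format and the function names are hypothetical, and the units are whatever the tracker reports (for example pixels per second in image coordinates).

```python
import math

def velocities(track):
    """Per-step (vx, vy) from a time-ordered list of (t, x, y) observations."""
    out = []
    for (t0, x0, y0), (t1, x1, y1) in zip(track, track[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # skip duplicate or out-of-order timestamps
        out.append(((x1 - x0) / dt, (y1 - y0) / dt))
    return out

def mean_speed_and_heading(track):
    """Speed and heading (radians) of the average velocity over the track.

    Returns (0.0, None) when there are too few usable observations.
    """
    vs = velocities(track)
    if not vs:
        return 0.0, None
    vx = sum(v[0] for v in vs) / len(vs)
    vy = sum(v[1] for v in vs) / len(vs)
    return math.hypot(vx, vy), math.atan2(vy, vx)
```

Averaging over several frames smooths out tracker jitter at the cost of reacting more slowly to sudden changes in direction.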

DIGF5002 2017-02-09

Ideation.

Track a moving object as it moves towards the path of the object detection system of a visually impaired user. The listening system has a boundary. Once the moving object enters that boundary, the listener’s system will detect it and translate both its shape and its proximity to sound.
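As a first sketch of this idea, the boundary check and the translation to sound can be written as a single function: nothing is produced while the object is outside the boundary, and inside it, proximity sets loudness while a shape value sets pitch. The function name `sonify`, the 5 m boundary, the 220 Hz base pitch, the two-octave range, and the assumption that shape arrives as a number between 0 and 1 are all placeholders for experimentation, not decisions made in these notes.

```python
def sonify(distance_m, shape_value, boundary_m=5.0):
    """Map an object's distance and shape to sound parameters.

    Returns None while the object is outside the detection boundary,
    otherwise a dict with 'gain' (0 to 1) and 'pitch_hz'.
    """
    if distance_m > boundary_m:
        return None  # outside the boundary: the system stays silent
    # Proximity rises from 0 at the boundary edge to 1 at the listener.
    proximity = 1.0 - max(distance_m, 0.0) / boundary_m
    s = min(max(shape_value, 0.0), 1.0)
    return {
        "gain": proximity,
        # Shape sweeps pitch over two octaves above the base (assumed mapping).
        "pitch_hz": 220.0 * 2.0 ** (2.0 * s),
    }
```

For example, `sonify(6.0, 0.5)` returns `None`, while an object halfway into the boundary produces a gain of 0.5.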